Apple's last tower topples… and the others will follow

Apple has discontinued the Mac Pro – but it's just the first of the tower computers to go. The rest will follow soon.

Fruit-sniffers extraordinaire 9-to-5 Mac got the news yesterday, complete with official confirmation from Apple itself. It's official and it's happened, but there have been warning signs for months – in November 2025, Bloomberg's Matt Gurman said "The Mac Pro is on the back burner."

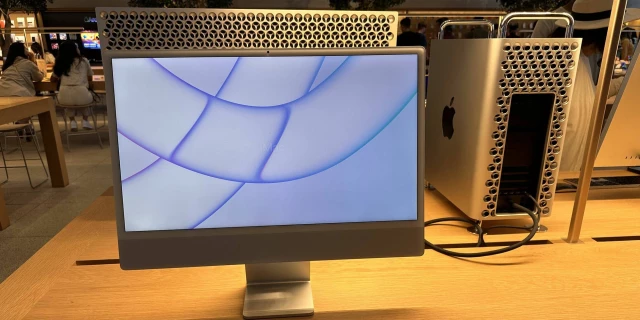

The phantom fruit-flingers of Silicon Valley launched the seven-thousand-buck Apple Silicon-based Mac Pro in June 2023, with an M2 Ultra SoC. It sported seven PCIe slots – but the problem was that cash-rich customers couldn't add the sorts of expansion that normally go into a PCIe slot… to the extent that Apple publishes a page about PCIe cards you can install in your Mac Pro (2023). Notably, the machine did not support add-on GPUs: only the GPU that's integrated into the CPU complex along with the machine's RAM and primary flash storage. The machine also had no RAM expansion whatsoever.

Presumably, this limited its appeal for many traditional buyers, and the machine never saw an M3 or M4 model, let alone the M5 SoC that The Register covered shortly before Bloomberg called the Arm64 cheesegrater's fate.


Farewell, Mac Pro: Increasing integration means the end of expandable computers. Liam Proven (The Register)


realitista

in reply to Powderhorn

qupada

in reply to realitista

The interesting thing is the people who will care the most about this are professional users, who actually did require a machine with real expandability, to stuff full of the likes of SDI video IO cards (eg aja.com/products/kona-5).

If you ask those people, they'll undoubtedly gladly tell you how much it sucked dealing with Thunderbolt-to-PCIe expansion cages during the "Trashcan" era in order to use their machine for their work.

While Thunderbolt's throughput has certainly improved a bunch since then (80Gbps symmetrical or 120/40Gbps asymmetrical for TB5, vs 20Gbps for TB2 back in that era), latency and stability still frankly leave a lot to be desired versus a real PCIe slot.

For people who already perceive Apple devices as overpriced toy computers, their further alienating what was at one point their primary target audience - high-end professional users - will certainly seem like an odd choice.

KONA 5 8-lane PCIe 3.0 I/O card

www.aja.com

artyom

in reply to Powderhorn

Honestly I'm shocked desktop PCs have lasted this long.

That being said, PC gaming is a growing trend, not shrinking, so I suspect there will continue to be at least some availability in the future for those components?

Additionally, while Macs are really great at some workloads, they're still inferior in others to existing desktop machines with dedicated GPUs, and the closest competitor from Apple will still cost at least twice as much.

Kairos

in reply to artyom

Desktop PCs are so much more powerful and faster than laptops of the same spec. Not to mention cheaper.

High integration in laptops saves space and wildly increases battery life for the same battery, but at a higher cost.

artyom

in reply to Kairos

Kairos

in reply to artyom

Integrated processors let laptops be faster without also using more power. Strictly speaking, it'd be cheaper to just use a faster CPU, but battery life is more important than cost, so lots of money is spent on integrating processors.

Desktops are still around because they're upgradable and faster than their laptop brothers.

artyom

in reply to Kairos

Kairos

in reply to artyom

An AIO is effectively a laptop without a keyboard. They're functionally very similar (appealing to less power-hungry users). They're just less mobile.

Presumably it's cheaper for Apple to just put the integrated CPUs in everything because it'd be expensive to make another model.

I guarantee you this trade-off only makes sense for Apple. Other AIOs don't always have the new laptop chips from Intel because it makes more sense to use the desktop one with all the space they have.

artyom

in reply to Kairos

Kairos

in reply to artyom

I get what you mean. What I'm trying to say is that desktop/non-integrated CPUs are cheaper, and those cost savings carry over into a large form factor. Apple doesn't put a desktop chip in their iMacs because they don't make one. That's not what their customer base needs. If they did it'd be 4x faster for the same price.

And these Arm chips are slower than x86. x86 is so much faster, at least for single-core performance, which matters a LOT more for desktop use cases.

artyom

in reply to Kairos

- YouTube

youtu.be

Kairos

in reply to artyom

artyom

in reply to Kairos

Kairos

in reply to artyom

artyom

in reply to Kairos

Kairos

in reply to artyom

Which again would be cheaper if they put the chips in separate enclosures. Just way bigger and more power usage.

Macs are good at video editing because Apple actually gives a shit about hardware encoding. NVENC is the only competitor. Everything else is shit.

tal

in reply to artyom

I'm not sold that modular desktops are going away in general. SoCs have some benefits in terms of power usage, but those are most-substantial on phones and least-substantial on the desktop.

My understanding is that memory may move away from DIMMs to CAMM2 to permit higher speeds, but that's still a modular system.

type of integrated circuit; integration of the functions of a system on a chip

Contributors to Wikimedia projects (Wikimedia Foundation, Inc.)

artyom

in reply to tal

tal

in reply to artyom

Clent

in reply to tal

CompactFlax

in reply to artyom

Wait until we see the 2026 stats for hardware sales. 📉

Though I think the supply issues will hurt consoles just as much.

circuitfarmer

in reply to Powderhorn

I'm not understanding the logic here. Apple killed their last tower. That isn't surprising, and their user base is perfectly happy buying nothing but SoCs.

Then there is a still-expanding PC gaming market, where building the machine from discrete parts is a portion of the hobby. By and large, this has never really overlapped with Apple's user base.

The article does a poor job saying why we should expect non-Apple machines to go the same direction.


artyom

in reply to circuitfarmer

They already are. Increased speed and efficiency are solid reasons. The Mac Pro was absolutely enormous in comparison to the new Mac Studio, which absolutely blows it away in terms of performance, while being a lot cheaper. Strix Halo is a great example of similar benefits on the PC front.

The vast majority of PCs aren't sold to hobbyists. Gamers mostly benefit from the existence of other markets that these chips can be sold to. If those go, these chips get taken off the market.

tal

in reply to Powderhorn

Discrete disk controllers are still around.

My last desktop had a PCI SATA card that I added after I exhausted all of the on-motherboard SATA slots.

My current one has a JBOD SATA USB Mass Storage enclosure.

SharkAttak

in reply to tal

tal

in reply to SharkAttak

masterspace

in reply to Powderhorn

This is a bad article. It's just an Apple fanboy watching their company continue its trend of shitting on customers and assuming that everyone inevitably will, apparently never once reflecting on whether their insistence on sticking with Apple is the real problem.

Their argument boils down to CPUs increasingly integrating basic versions of other components over time meaning that desktops will disappear... Ignoring that the desktop market has stayed surprisingly flat that entire time and has certainly not disappeared.

If your argument is that integrated CPUs will outclass discrete components connected with high speed buses then you need to make it from an engineering standpoint, not a headline one.

I also don't understand his reasoning that because Nvidia didn't buy ARM, they don't get to make an integrated CPU... Nvidia made and sold an integrated ARM CPU before ever being rumoured to buy them, and they still make and sell it to this day... because ARM's entire business model is based on companies like Nvidia licensing their designs.


tal

in reply to masterspace

checks article history

Almost all of their articles are about Linux.

Powderhorn

in reply to tal

masterspace

in reply to tal

And what hardware do they run Linux on? And what phone do they use? And what TV device?

And if they're not a Mac fanboy, then their insistence on looking at Apple as the only possible sign of industry trends is mind-bogglingly narrow.

Powderhorn

in reply to masterspace

It's an opinion piece. I don't agree with all of it, either.

This said, do you really miss having a northbridge and southbridge?

masterspace

in reply to Powderhorn

Do I really miss it? It never once came up in any practical situation.

You would buy a mobo and a CPU and put them together and not think about the specific buses or controllers you have available, unless you had a very specific reason to.

Unless we're talking about a mobile, power-constrained device, I certainly would rather have expandable RAM and graphics cards than everything slammed in a single unchanging chip.

And again, the fact that the author states that Nvidia can't release an integrated SoC because they didn't buy ARM, when they actively sell an integrated SoC licensed from ARM, makes the entire rest of their "opinion" untrustworthy.