Telling an AI model that it's an expert makes it worse

Many people start their work with AI by prompting the machine to imagine it is an expert at the task they want it to perform, a technique that boffins have found may be futile.

Persona-based prompting – which involves using directives such as "You're an expert machine learning programmer" in a model prompt – dates back to 2023, when researchers began to explore how role-playing instructions influenced AI models’ output.

It's now common to find online prompting guides that include passages like, "You are an expert full-stack developer tasked with building a complete, production-ready full-stack web application from scratch."

But academics who have researched this approach report it does not always produce superior results.

In a pre-print paper titled "Expert Personas Improve LLM Alignment but Damage Accuracy: Bootstrapping Intent-Based Persona Routing with PRISM," researchers affiliated with the University of Southern California (USC) find that persona-based prompting is task-dependent – which they say explains the mixed results.

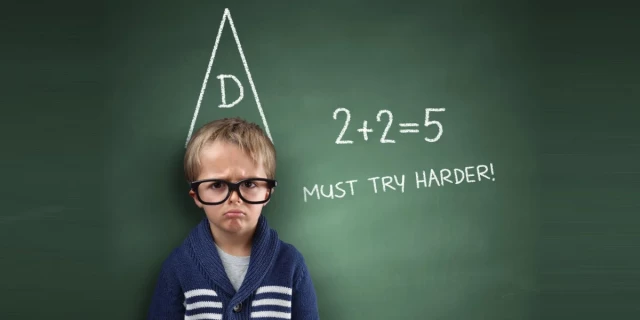

For alignment-dependent tasks, like writing, role-playing, and safety, personas do improve model performance. For pretraining-dependent tasks like math and coding, using the technique produces worse results.
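The task-dependence described above can be illustrated with a minimal sketch. This is not the paper's PRISM method, whose details are not given here; the `build_prompt` helper and the `ALIGNMENT_TASKS` set are hypothetical names, and the categories are simply the examples listed in the article.

```python
# Hypothetical set of alignment-dependent task types, taken from the
# examples in the article (not from the paper's actual routing scheme).
ALIGNMENT_TASKS = {"writing", "role-playing", "safety"}


def build_prompt(task_type: str, request: str, persona: str) -> str:
    """Prepend a persona only when the task is alignment-dependent."""
    if task_type in ALIGNMENT_TASKS:
        return f"{persona}\n\n{request}"
    # Math, coding, and any unrecognized task type get the bare request.
    return request


print(build_prompt("coding", "Write a merge sort.", "You are an expert programmer."))
print(build_prompt("writing", "Draft a short note.", "You are an expert editor."))
```

Under these assumptions, a coding request is sent without the persona line, while a writing request keeps it.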

Telling an AI model that it’s an expert programmer makes it a worse programmer

Researchers say persona-based prompting can work for safety but not for facts

Thomas Claburn (The Register)

CodexArcanum

in reply to Powderhorn

Makes sense if you consider how LLMs work. No expert starts off a piece of writing with "I am an expert in math, here is a proof of Fermat's Last Theorem."

You'd get better results prompting it for a style of document. Instead of "you are a screenwriter" just start it off "Script for Harry Potter Goes for Surf n TERF. Scene 1: The Sweaty Troll, a wizard gay bar" and then let it run from there.
