Items tagged with: aied

Some recent #AIEd articles:

* PromptDecipher: AI Tutor Authoring Through Editable Simulated Interactions arxiv.org/abs/2605.16605 Source code: anonymous.4open.science/r/teac…

* Tutoring Agents Struggle Where Feedback Matters Most arxiv.org/abs/2605.16207v1

* Modeling AI-TPACK in Practice arxiv.org/abs/2605.13906

* Validating AI-Generated Classroom Observations sciencedirect.com/science/arti…

* Simulating Students or Sycophantic Problem Solving? arxiv.org/abs/2605.12748

#EdTech

Simulating Students or Sycophantic Problem Solving? On Misconception Faithfulness of LLM Simulators

Large language models (LLMs) can fluently generate student-like responses, making them attractive as simulated students for training and evaluating AI tutors and human educators. arXiv.org

Self-reported measures (surveys) are often uncorrelated, or even negatively correlated, with more objective measures (such as observations and scenario or performance assessments). Examples:

* Teacher AI literacy arxiv.org/abs/2601.06101

* Applying professional development to the classroom academic.oup.com/bioscience/ar…

* AI cognitive offloading goedel.io/p/the-machine-that-s…

* Student learning from teaching pnas.org/doi/10.1073/pnas.1821…

* And grades link.springer.com/article/10.1…

* TPACK osf.io/preprints/psyarxiv/bhqx…

#EdDev #AIEd

The Machine That Stops You From Thinking

How AI is quietly outsourcing your cognition, and why you won’t notice until it’s too late. Alexander Rink (Gödel's)

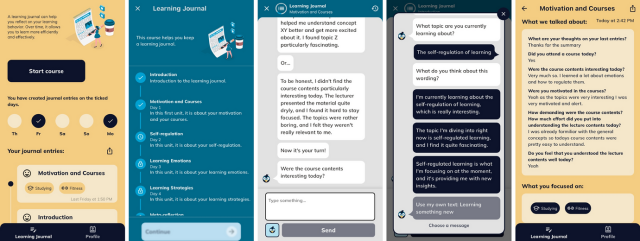

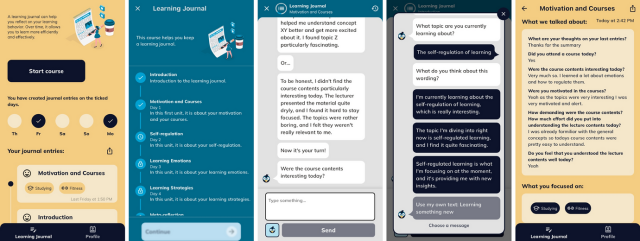

Designing a mobile chatbot-based learning journaling system for intrinsic motivation and engagement

link.springer.com/article/10.1…

#AIEd #Education #EdTech

Designing a mobile chatbot-based learning journaling system for intrinsic motivation and engagement - International Journal of Educational Technology in Higher Education

Journaling enables students to reflect on their learning processes and thereby strengthen their self-regulation, a key competency for meeting academic goal… SpringerLink