Items tagged with: gemma4

Every few months someone announces a model you can “run locally” and every few months the fine print tells the same story. You need 80GB of VRAM. Or a server.

Gemma 4 is different. Not because Google said so. Because of 3.8 billion active parameters inside a 26 billion parameter model. The short version is that for the first time, running a genuinely capable AI agent on a consumer GPU is not a compromise.

firethering.com/gemma-4-local-…

#gemma4 #ai #aiagent #google #trending #opensource

Gemma 4 Makes Local AI Agents Actually Practical - Firethering

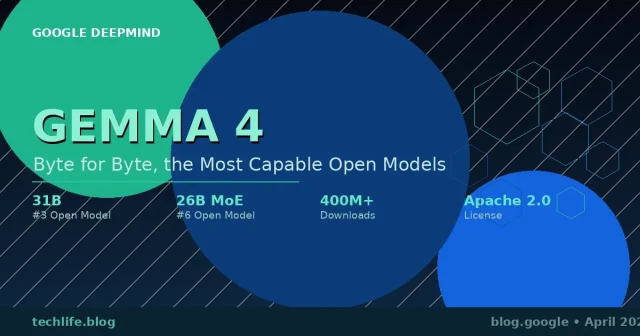

Gemma 4 is a family of four models. Two dense models built for phones and laptops, E2B and E4B. One MoE model at 26B A4B for consumer GPUs. One dense 31B for workstations and servers. All four are multimodal.

Mohit Geryani (Firethering)
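
For a rough sense of what "3.8 billion active parameters inside a 26 billion parameter model" means in practice, here is a back-of-the-envelope sketch. It uses only the two parameter counts quoted in the post; the bit widths are illustrative assumptions, not published Gemma 4 quantization formats. The estimate covers weights only and ignores the KV cache, activations, and runtime overhead.

```python
# Back-of-the-envelope arithmetic for a mixture-of-experts (MoE) model.
# The parameter counts come from the post above; the bit widths are
# assumed for illustration only.

def weight_memory_gb(total_params: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return total_params * bits_per_weight / 8 / 1e9

TOTAL_PARAMS = 26e9    # all experts, which normally must stay loaded
ACTIVE_PARAMS = 3.8e9  # parameters actually used for each token

# Share of the model that does work on any single token.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Weight footprint at a few assumed precisions.
footprints = {bits: weight_memory_gb(TOTAL_PARAMS, bits) for bits in (16, 8, 4)}

print(f"active fraction per token: {active_fraction:.1%}")
for bits, gb in footprints.items():
    print(f"{bits}-bit weights: ~{gb:.0f} GB")
```

The active count mainly reduces compute per token. Memory is still driven by the full 26 billion parameters unless some experts are offloaded, so how well the model fits a given consumer GPU depends heavily on the precision chosen.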

winbuzzer.com/2026/04/03/googl…

Google Releases Gemma 4 Open Models Under Apache 2.0 License

#AI #Google #Gemma4 #OpenSourceAI #GoogleDeepMind #LLMs #GoogleCloud #Alphabet #AIModels

Google has released Gemma 4, a family of four open-weight AI models under Apache 2.0, with edge-to-workstation variants built on Gemini 3 technology.

Markus Kasanmascheff (WinBuzzer)

Gemma 4: Google's Most Capable Open Models Are Here — and They Run on Your Laptop

techlife.blog/posts/gemma-4-go…

#Gemma4 #GoogleAI #OpenSourceAI #LLM #OnDeviceAI #MixtureOfExperts

Google's Gemma 4 family brings frontier-level AI reasoning to devices ranging from Android phones to developer workstations, under a fully open Apache 2.0 license.

Turker Senturk (TechLife — AI, Software Engineering & Emerging Technology)